LogicTronix partners with AMD, Inc. on ML acceleration for Versal AI Core and AI Edge based platforms. We currently harness the compute power of the VCK190, VEK280, and custom Versal carrier platforms for AI and ML workloads in multi-sensor, high-resolution applications targeting Automotive (ADAS/AD), Defense, Smart City (Vision), Medical Imaging, and similar domains. These AI and ML acceleration developments can be migrated to any device in the Versal AI Core and AI Edge series.

AMD Versal is uniquely positioned to handle ML workloads in multi-sensor, multi-platform solutions, which are in high demand in the Automotive and Medical Imaging fields, alongside many non-ML workloads such as sensor processing, industrial robotics platforms, and communications.

AMD provides the Vitis AI framework for deploying AI/ML models on the Versal platform. By leveraging the Neural Processing Unit (NPU) IP along with the Vitis AI toolchain, developers can quantize, compile, and deploy models from PyTorch, TensorFlow, ONNX, or other floating-point formats onto Versal boards.
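To make the quantization step more concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It does not call the Vitis AI toolchain, and the function names `quantize_int8` and `dequantize` are hypothetical; the scheme that Vitis AI actually applies may differ. It only illustrates the general idea of mapping floating-point weights to 8-bit integers through a scale factor.

```python
def quantize_int8(values):
    """Symmetric per-tensor quantization of floats to the range [-127, 127].

    Illustrative only: not the Vitis AI quantizer.
    """
    max_abs = max((abs(v) for v in values), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map INT8 codes back to approximate floating-point values."""
    return [q * scale for q in quantized]


weights = [0.50, -1.27, 0.003, 1.00]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, scale, restored)
```

Each restored value differs from the original by at most half of `scale`, which is the precision given up in exchange for 8-bit storage and arithmetic.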

Learn more about Vitis AI 6.1 and the NPU: https://vitisai.docs.amd.com/en/latest/

The LogicTronix ADAS Sensor Fusion Solution with Versal is a VitisAI-NPU fused solution that leverages temporal multi-tenancy to execute multiple models on the same NPU IP. We also deployed custom CNN networks in the fabric to achieve higher performance and accuracy.
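Temporal multi-tenancy can be pictured as several models taking turns on one accelerator instance. The sketch below is a conceptual, pure-Python illustration under that assumption; `SharedAccelerator`, `time_multiplex`, and the two toy models are hypothetical names that do not correspond to the Vitis AI runtime API or to the LogicTronix implementation.

```python
class SharedAccelerator:
    """Stand-in for a single NPU instance that runs one model at a time."""

    def __init__(self):
        self.loaded = None   # name of the model currently resident
        self.switches = 0    # number of model switches performed

    def run(self, model_name, model_fn, frame):
        if self.loaded != model_name:
            # Hypothetical context switch: swap in the requested model.
            self.loaded = model_name
            self.switches += 1
        return model_fn(frame)


def time_multiplex(accelerator, models, frames):
    """Round-robin schedule: every frame is processed by each model in turn."""
    results = []
    for frame in frames:
        for name, model_fn in models.items():
            results.append((name, frame, accelerator.run(name, model_fn, frame)))
    return results


# Toy "models" that just label their input.
models = {
    "detector": lambda f: f"boxes({f})",
    "segmenter": lambda f: f"mask({f})",
}
npu = SharedAccelerator()
out = time_multiplex(npu, models, ["frame0", "frame1"])
for item in out:
    print(item)
```

The `switches` counter makes the trade-off visible: sharing one accelerator saves hardware resources, but every switch between models adds overhead that a real scheduler would try to minimize.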

Figure: ADAS Sensor Fusion Solution with Versal Adaptive SoC, AI Engines, and Vitis AI NPU

Learn more about the LogicTronix ADAS Sensor Fusion Solution powered by AI/ML acceleration:

We use the VCK190, VEK280, and VEK385 Versal platforms (EVKs) as our primary in-house prototyping systems. Using these boards, we have deployed a range of solutions leveraging the Versal NPU, DPU, AI Engine (AIE), and programmable logic (PL). Our work includes implementations for defense and automotive customers, as well as integrations with custom carrier platforms.

If you’re interested in learning more about LogicTronix’s development in AI/ML acceleration on Versal, please fill out the contact form in the section below.

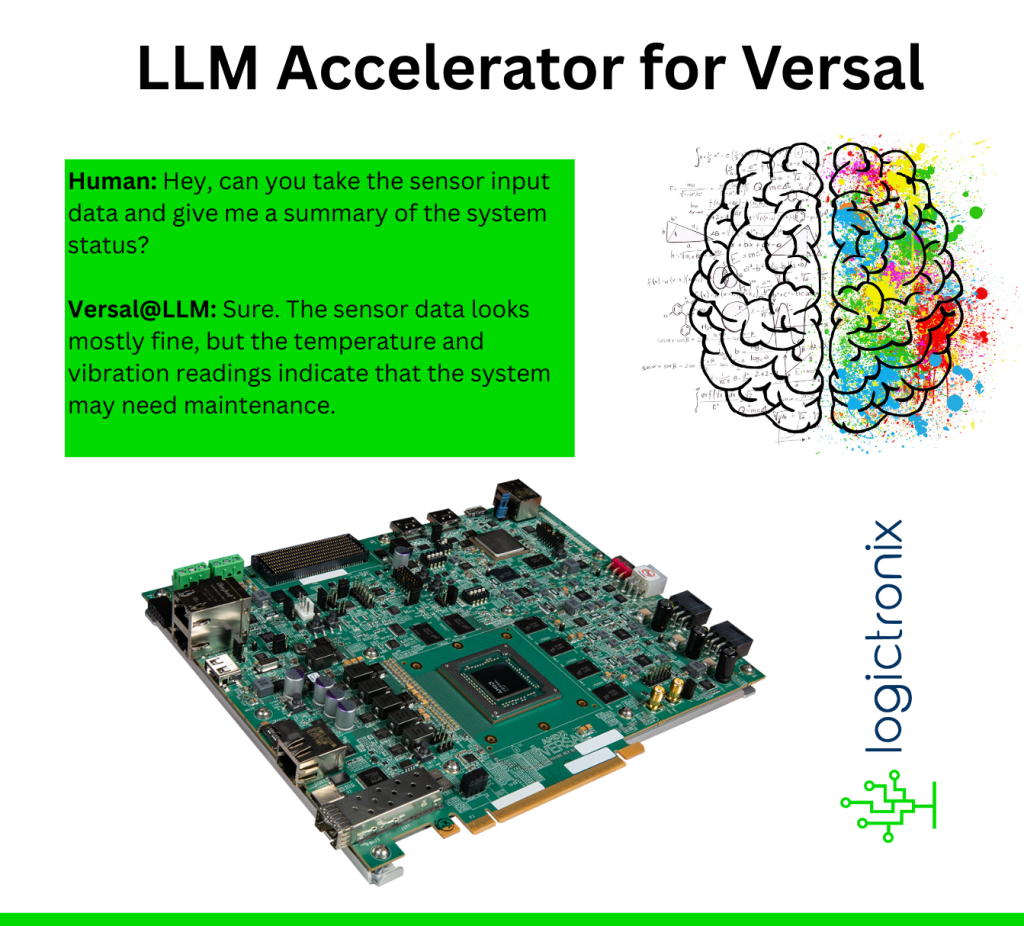

GenAI and LLM Acceleration on Versal Adaptive SoC

Large Language Models (LLMs) unlock new possibilities for next-level human-machine interactions. They enable machines and systems to become more interactive, responsive, and lifelike.

To bring natural language-based experiences to a wide range of industries and applications, we are releasing an LLM accelerator for the AMD-Xilinx Versal Adaptive SoC platform.

LogicTronix’s GenAI/LLM Acceleration solution supports LLMs with up to 7B parameters running efficiently on the Versal platform.
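As rough context for the 7B figure, the storage needed for model weights scales with the parameter count times the bits per parameter. The sketch below is back-of-the-envelope arithmetic only: the precisions listed are illustrative assumptions, not a statement of what the LogicTronix solution uses, and the estimate ignores activations, the KV cache, and runtime buffers. The helper name `weight_footprint_gb` is hypothetical.

```python
def weight_footprint_gb(num_params, bits_per_param):
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


NUM_PARAMS = 7_000_000_000  # 7B parameters, the upper bound quoted above

# Assumed precisions, chosen only to show how the footprint scales.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_footprint_gb(NUM_PARAMS, bits):.1f} GB")
```

Halving the bits per parameter halves the weight footprint, which is why quantization matters for fitting larger models into edge device memory.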

Beyond many industrial applications, harnessing LLMs and GenAI on the AMD-Xilinx Versal Adaptive SoC platform makes it possible to:

- Produce analytical summaries of sensor and input data, for example analyzing vehicle movement in an edge-AI traffic management system, or interpreting sensor or sensor fusion data to identify the right maintenance plan.

- Generate information at the edge for better human-machine communication or clearer presentation of that information.

Would you like to know more about AI/ML or LLM acceleration on Versal?

Please fill out the following form for additional information.